Tuesday, December 18, 2007

How Google Tests Results Quality

Technology Review interviewed Google’s director of research, Peter Norvig, and asked him how Google measures the quality of its search results. Peter’s answer:

We test it in lots of ways. At the grossest level, we track what users are clicking on. If they click on the number-one result, and then they’re done, that probably means they got what they wanted. If they’re scrolling down, page after page, and reformulating the query, then we know the results aren’t what they wanted. Another way we do it is to randomly select specific queries and hire people to say how good our results are. These are just contractors that we hire who give their judgment. We train them on how to identify spam and other bad sites, and then we record their judgments and track against that. It’s more of a gold standard because it’s someone giving a real opinion, but of course, since there’s a human in the loop, we can’t afford to do as much of it.
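To make the click-tracking idea concrete, here is a rough Python sketch of the kind of heuristic Peter describes. It is purely illustrative and not Google's actual method: the `QueryEvent` record, its fields, and the three labels are all made up for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class QueryEvent:
    """One query within a search session (hypothetical log record)."""
    query: str
    clicked_ranks: List[int] = field(default_factory=list)  # 1 = top result
    pages_viewed: int = 1  # number of result pages the user went through


def classify_session(events: List[QueryEvent]) -> str:
    """Guess from click behaviour alone whether a session went well."""
    if not events:
        return "unclear"
    last = events[-1]
    # One query, a single click on the number-one result, then done:
    # that probably means the user got what they wanted.
    if len(events) == 1 and last.clicked_ranks == [1] and last.pages_viewed == 1:
        return "satisfied"
    # Paging through results and reformulating the query:
    # the results probably weren't what the user wanted.
    reformulated = len(events) > 1
    paged = any(e.pages_viewed > 1 for e in events)
    if reformulated and paged:
        return "dissatisfied"
    # Everything else is too ambiguous to call from clicks alone.
    return "unclear"
```

For example, `classify_session([QueryEvent("peter norvig", [1])])` comes out as "satisfied", while a session with two reformulated queries and several result pages viewed comes out as "dissatisfied". The large "unclear" bucket is one reason a signal like this would need to be checked against human judgments such as those of the paid raters mentioned in the quote.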

Peter also describes how Google sometimes invites people into its labs, or visits them at home, to observe them searching, as this shows what people find difficult about search.

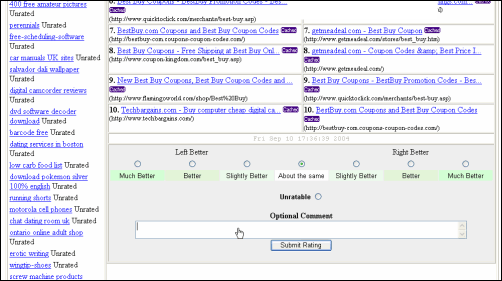

On a related note, two years ago the Search Bistro blog got hold of information showing Google’s evaluation lab (that’s the paid Human Evaluation program for judging search results, not to be confused with the unpaid Trusted Tester program for yet-to-be-released services).

[Thanks Pd! Screenshot by Search Bistro.]
